Natural Language Processing: How Machines Understand Language

Every time you ask a voice assistant a question, receive a translated webpage, or get flagged for a grammar mistake in a document, you’re interacting with Natural Language Processing — the branch of artificial intelligence that gives machines the ability to read, interpret, and generate human language.

NLP sits at one of the most fascinating intersections in all of technology: linguistics, statistics, and machine learning combined to solve a problem humans find trivially easy but computers have historically found extraordinarily hard. Understanding what the sentence “I saw the man with the telescope” actually means — is the speaker using the telescope, or observing a man who has one? — requires context, world knowledge, and ambiguity resolution that took decades of research to even partially replicate in software.

Today, NLP powers some of the most widely used technology on the planet. This article breaks down what it is, how it works, why it matters, and where it’s heading.

What Is Natural Language Processing?

Natural Language Processing (NLP) is the field of AI concerned with enabling computers to understand, interpret, and generate text and speech in a way that is both meaningful and useful. “Natural language” simply means the kind of language humans use naturally — English, Mandarin, Arabic, and thousands of others — as opposed to formal programming languages like Python or SQL.

NLP sits within the broader field of artificial intelligence and machine learning, but it draws heavily from computational linguistics — the scientific study of language structure — as well as statistics and, increasingly, deep learning.

The core challenge NLP tries to solve is this: human language is messy, ambiguous, context-dependent, and constantly evolving. A word like “bank” means something entirely different depending on whether you’re fishing or filing a tax return. Sarcasm, idiom, cultural reference, implication — these are things humans parse effortlessly and computers have long struggled to handle reliably.

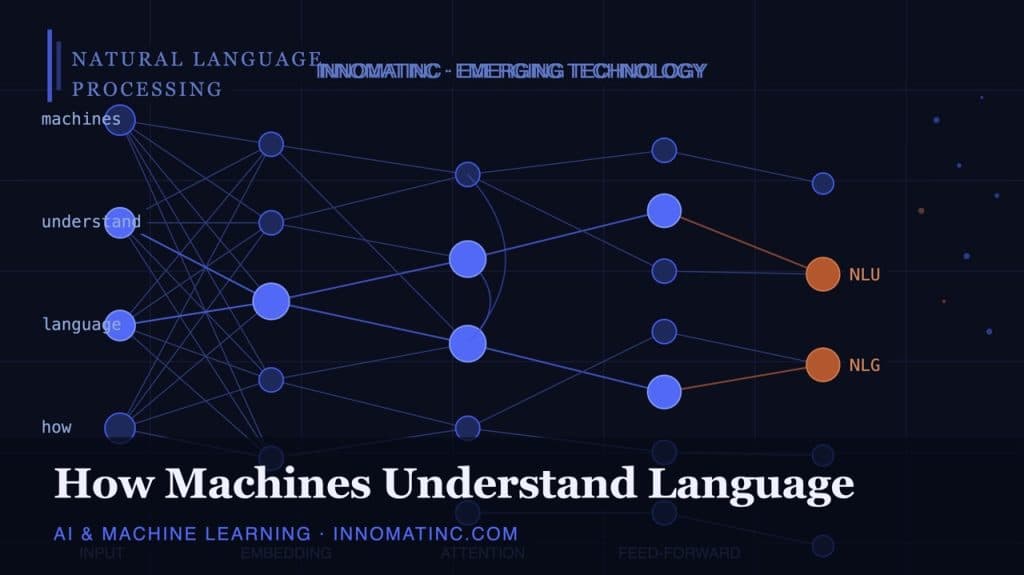

NLP vs. NLU vs. NLG

You’ll often see three related terms used in this space:

- NLP (Natural Language Processing) — the broad umbrella term covering all computational work with human language.

- NLU (Natural Language Understanding) — the subset focused specifically on comprehension: extracting meaning, intent, and structure from text or speech.

- NLG (Natural Language Generation) — the subset focused on producing human-like text or speech as output, such as writing a summary or composing a reply.

Modern large language models like GPT-4 and Claude effectively combine all three: they understand input, reason about it, and generate fluent output in response.

A Brief History: From Rules to Neural Networks

NLP did not spring into existence with ChatGPT. It has a history stretching back to the 1950s, and understanding that history explains a great deal about why modern systems work the way they do.

The Rule-Based Era (1950s–1980s)

Early NLP systems were built on hand-crafted linguistic rules. Teams of linguists and programmers would encode grammar rules, dictionaries, and syntactic patterns directly into software. If a sentence matched a known pattern, the system could parse it. If it didn’t, it failed.

These systems were brittle. Language is too varied and too creative to be fully captured by rules. They worked in narrow, controlled domains — like parsing structured sentences in an airline booking system — but collapsed under the richness of real-world text.

The Statistical Era (1990s–2010s)

The shift came when researchers began treating language as a statistical problem rather than a rule-following one. Instead of encoding grammar rules by hand, systems were trained on large datasets of text to learn the statistical patterns of how words and phrases co-occur.

This era produced practical, widely deployed tools: statistical machine translation (the early versions of Google Translate), spam filters, sentiment analysis tools, and early question-answering systems. Performance improved dramatically, though the systems still struggled with nuance and long-range context.

The Deep Learning Era (2013–present)

The arrival of neural network-based approaches transformed NLP. Two developments were particularly important:

Word embeddings (2013): Research from Google introduced word2vec, a technique for representing words as numerical vectors in a high-dimensional space where semantic relationships are preserved geometrically. Words with similar meanings cluster together, and analogies emerge from the training data: the vector for “king”, minus “man”, plus “woman” lands closest to the vector for “queen”. Earlier methods had also modelled word meaning statistically, but word2vec made it practical to learn such representations at scale, capturing something of what words mean rather than just their surface form.
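
To make the analogy arithmetic concrete, here is a minimal Python sketch. The three-dimensional vectors are hypothetical and hand-picked so that the dimensions loosely mean royalty, maleness, and femaleness; real word2vec vectors are learned from text, have hundreds of dimensions, and have no such readable axes. Following common practice in analogy tests, the three input words are excluded from the search.

```python
import math

# Hypothetical toy embeddings; dimensions loosely encode [royalty, maleness, femaleness].
EMBEDDINGS = {
    "king":  [0.9, 0.8, 0.1],
    "queen": [0.9, 0.1, 0.8],
    "man":   [0.1, 0.9, 0.1],
    "woman": [0.1, 0.1, 0.9],
    "apple": [0.0, 0.2, 0.2],
}

def cosine(u, v):
    """Cosine similarity: how closely two vectors point in the same direction."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))

def analogy(a, b, c, vectors):
    """Return the word nearest to vec(a) - vec(b) + vec(c), excluding a, b and c."""
    target = [x - y + z for x, y, z in zip(vectors[a], vectors[b], vectors[c])]
    candidates = [w for w in vectors if w not in {a, b, c}]
    return max(candidates, key=lambda w: cosine(target, vectors[w]))

print(analogy("king", "man", "woman", EMBEDDINGS))  # queen
```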

The Transformer architecture (2017): A landmark paper from Google — Attention Is All You Need — introduced the Transformer model, which uses a mechanism called self-attention to weigh the relevance of every word in a sentence against every other word simultaneously. This allowed models to capture long-range dependencies in text far more effectively than previous approaches. Nearly every major NLP system built since 2018 — BERT, GPT, T5, LLaMA — is based on the Transformer architecture.

How NLP Actually Works: The Core Techniques

A modern NLP pipeline involves several distinct processing stages. Understanding these gives you a clearer picture of what’s happening “under the hood” when a machine reads text.

Tokenisation

Before a model can process text, the text must be broken into units called tokens. A token is roughly — but not exactly — a word. “Running” might be one token; “unbelievable” might be split into “un”, “believ”, and “able” depending on the tokeniser. Punctuation, spaces, and special characters are all handled according to the tokenisation scheme.

This step matters more than it sounds. How a model tokenises input directly affects how it handles rare words, different languages, numbers, and code.
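
The sketch below shows one simple way to split a word into subwords: greedy longest-match lookup against a fixed vocabulary, similar in spirit to WordPiece. The vocabulary is a hypothetical toy; production tokenisers (BPE, WordPiece, SentencePiece and others) learn vocabularies of tens of thousands of pieces from data, and WordPiece additionally marks word-internal pieces with a “##” prefix, which is omitted here.

```python
# Hypothetical toy vocabulary; real tokenisers learn theirs from large corpora.
VOCAB = {"un", "believ", "able", "run", "running"}

def tokenise(word, vocab):
    """Split a word into subwords by greedy longest-match-first lookup."""
    tokens = []
    start = 0
    while start < len(word):
        # Try the longest remaining substring first, then shrink it one character at a time.
        for end in range(len(word), start, -1):
            piece = word[start:end]
            if piece in vocab:
                tokens.append(piece)
                start = end
                break
        else:
            # No vocabulary entry starts here: emit an unknown marker and skip one character.
            tokens.append("[UNK]")
            start += 1
    return tokens

print(tokenise("running", VOCAB))       # ['running']
print(tokenise("unbelievable", VOCAB))  # ['un', 'believ', 'able']
print(tokenise("unrunable", VOCAB))     # ['un', 'run', 'able']
```

The last call shows why subwords help with rare words: a word the vocabulary has never seen whole can still be represented from familiar pieces instead of collapsing into a single unknown token.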

Part-of-Speech Tagging

POS tagging assigns a grammatical label to each token: noun, verb, adjective, preposition, and so on. Even this seemingly simple task has edge cases — “run” can be a noun or a verb depending on context — which is why statistical and neural approaches outperform rule-based ones here.
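
A minimal sketch of the statistical idea, using a hypothetical four-sentence training corpus: instead of a hand-written rule, the tagger picks the tag most often seen for a word after the previous tag, which is enough to separate “a run” (noun) from “we run” (verb). Real taggers use far richer context and models such as hidden Markov models, CRFs, or neural networks; this is only the simplest context-sensitive baseline.

```python
from collections import Counter, defaultdict

# Hypothetical hand-tagged corpus; real taggers train on large annotated treebanks.
TRAINING = [
    [("I", "PRON"), ("run", "VERB"), ("daily", "ADV")],
    [("we", "PRON"), ("run", "VERB"), ("fast", "ADV")],
    [("a", "DET"), ("run", "NOUN"), ("helps", "VERB")],
    [("the", "DET"), ("run", "NOUN"), ("was", "VERB"), ("long", "ADJ")],
]

def train(corpus):
    """Count tags for each (previous tag, word) pair, plus per-word tag counts as a fallback."""
    in_context = defaultdict(Counter)
    per_word = defaultdict(Counter)
    for sentence in corpus:
        prev = "<S>"  # sentence-start marker
        for word, tag in sentence:
            in_context[(prev, word.lower())][tag] += 1
            per_word[word.lower()][tag] += 1
            prev = tag
    return in_context, per_word

def tag(words, model):
    """Tag left to right, choosing the tag seen most often after the previous tag."""
    in_context, per_word = model
    tags, prev = [], "<S>"
    for word in words:
        counts = in_context.get((prev, word.lower())) or per_word.get(word.lower())
        best = counts.most_common(1)[0][0] if counts else "NOUN"  # crude default for unseen words
        tags.append(best)
        prev = best
    return tags

model = train(TRAINING)
print(tag(["the", "run", "was", "long"], model))  # ['DET', 'NOUN', 'VERB', 'ADJ']
print(tag(["we", "run", "fast"], model))          # ['PRON', 'VERB', 'ADV']
```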

Named Entity Recognition (NER)

NER identifies and classifies named entities in text: people, organisations, locations, dates, monetary values. A sentence like “Elon Musk announced a new Tesla factory in Mexico” would have “Elon Musk” tagged as a person, “Tesla” as an organisation, and “Mexico” as a location. NER is fundamental to information extraction, news analysis, and many business intelligence applications.
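
The simplest possible baseline is a gazetteer: a fixed list of known names matched against the text. The sketch below does a longest-match lookup over a hypothetical three-entry gazetteer. It reproduces the example above but, unlike trained NER models, it cannot recognise a name it has not been given, nor tell “Tesla” the company from Nikola Tesla the person.

```python
# Hypothetical gazetteer mapping lowercase token sequences to entity types.
GAZETTEER = {
    ("elon", "musk"): "PERSON",
    ("tesla",): "ORG",
    ("mexico",): "LOC",
}
MAX_LEN = max(len(key) for key in GAZETTEER)
PUNCTUATION = ".,!?;:"

def find_entities(text):
    tokens = [t.strip(PUNCTUATION) for t in text.split()]
    lowered = [t.lower() for t in tokens]
    entities, i = [], 0
    while i < len(tokens):
        # Prefer the longest gazetteer entry starting at position i.
        for n in range(min(MAX_LEN, len(tokens) - i), 0, -1):
            label = GAZETTEER.get(tuple(lowered[i:i + n]))
            if label:
                entities.append((" ".join(tokens[i:i + n]), label))
                i += n
                break
        else:
            i += 1  # no entity starts here
    return entities

print(find_entities("Elon Musk announced a new Tesla factory in Mexico"))
# [('Elon Musk', 'PERSON'), ('Tesla', 'ORG'), ('Mexico', 'LOC')]
```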

Dependency Parsing

Parsing analyses the grammatical structure of a sentence and identifies the relationships between words — which word is the subject, which is the object, which adjectives modify which nouns. This structural understanding is critical for any task that requires genuine comprehension rather than pattern matching.
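
Dependency parsers typically output, for each word, the index of its head word and a relation label. The sketch below hand-annotates one sentence in that format, using labels in the style of Universal Dependencies, and shows how subject, object, and modifiers can be read straight off the structure. No parser is involved; the annotation is written by hand for illustration.

```python
from dataclasses import dataclass

@dataclass
class Token:
    index: int   # 1-based position in the sentence
    text: str
    head: int    # index of the governing word; 0 marks the root
    deprel: str  # dependency relation label

# Hand-written parse of "The cat chased the small mouse"; a parser would predict head and deprel.
SENTENCE = [
    Token(1, "The", 2, "det"),
    Token(2, "cat", 3, "nsubj"),
    Token(3, "chased", 0, "root"),
    Token(4, "the", 6, "det"),
    Token(5, "small", 6, "amod"),
    Token(6, "mouse", 3, "obj"),
]

def dependents(tokens, head_index, label):
    """Words attached to the given head with the given relation."""
    return [t.text for t in tokens if t.head == head_index and t.deprel == label]

root = next(t for t in SENTENCE if t.deprel == "root")
print("verb:", root.text)                                        # verb: chased
print("subject:", dependents(SENTENCE, root.index, "nsubj"))     # subject: ['cat']
print("object:", dependents(SENTENCE, root.index, "obj"))        # object: ['mouse']
print("modifiers of 'mouse':", dependents(SENTENCE, 6, "amod"))  # modifiers of 'mouse': ['small']
```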

Sentiment Analysis

Sentiment analysis classifies the emotional tone of text: positive, negative, neutral, or more granular emotion labels. It is widely used in brand monitoring, product review analysis, and social media intelligence. Modern approaches can detect nuance like mixed sentiment (“the food was excellent but the service was terrible”) and sometimes even sarcasm, though sarcasm detection remains a genuine research challenge.
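
The mixed-sentiment case can be illustrated with a deliberately simple lexicon-based sketch: split the text at “but”, score each clause with a hypothetical word-polarity lexicon, and report “mixed” when clauses disagree. Modern systems learn these judgements from labelled data; a word list like this one cannot handle negation (“not good”) or sarcasm at all.

```python
import re

# Hypothetical polarity lexicon; real systems learn sentiment from labelled examples.
LEXICON = {"excellent": 2, "great": 2, "good": 1, "slow": -1, "bad": -1, "terrible": -2}

def clause_scores(text):
    """Split on the contrastive word 'but' and sum word polarities in each clause."""
    clauses = re.split(r"\bbut\b", text.lower())
    return [sum(LEXICON.get(w, 0) for w in re.findall(r"[a-z']+", c)) for c in clauses]

def label(text):
    scores = clause_scores(text)
    has_pos = any(s > 0 for s in scores)
    has_neg = any(s < 0 for s in scores)
    if has_pos and has_neg:
        return "mixed"
    if has_pos:
        return "positive"
    if has_neg:
        return "negative"
    return "neutral"

print(label("The food was excellent but the service was terrible"))  # mixed
print(label("The food was excellent"))                                # positive
```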

Semantic Similarity and Embeddings

Using vector representations of text, NLP systems can calculate how semantically similar two pieces of text are, even when they use completely different words. “The vehicle failed to start” and “the car wouldn’t turn on” would score high on semantic similarity despite sharing no keywords. This is central to search engines, recommendation systems, and retrieval-augmented generation (RAG) systems used in modern AI assistants.
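
The sketch below contrasts the two views. Similarity between embeddings is usually measured with cosine similarity, the cosine of the angle between two vectors. The sentence vectors here are hypothetical, hand-picked three-dimensional stand-ins for what a trained embedding model would produce; the word-overlap function shows that a purely lexical comparison finds almost nothing in common between the two paraphrases.

```python
import math

# Hypothetical sentence embeddings; a trained model would produce these,
# typically with hundreds of dimensions rather than three.
BROKEN = "The vehicle failed to start"
PARAPHRASE = "the car wouldn't turn on"
UNRELATED = "the recipe needs more salt"
EMBEDDINGS = {
    BROKEN: [0.90, 0.10, 0.30],
    PARAPHRASE: [0.85, 0.15, 0.35],
    UNRELATED: [0.10, 0.90, 0.20],
}

def cosine_similarity(u, v):
    """Cosine of the angle between u and v: 1.0 means they point the same way."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def word_overlap(s1, s2):
    """Jaccard overlap of lowercase word sets: a purely lexical comparison."""
    w1, w2 = set(s1.lower().split()), set(s2.lower().split())
    return len(w1 & w2) / len(w1 | w2)

print(cosine_similarity(EMBEDDINGS[BROKEN], EMBEDDINGS[PARAPHRASE]))  # close to 1
print(cosine_similarity(EMBEDDINGS[BROKEN], EMBEDDINGS[UNRELATED]))   # much lower
print(word_overlap(BROKEN, PARAPHRASE))  # 1/9: only "the" is shared
```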

Machine Translation

Neural machine translation, now the standard approach used by Google Translate, DeepL, and others, uses encoder-decoder Transformer architectures trained on very large collections of sentences paired with their translations. Quality has improved to the point where many professional translators now use MT output as a first draft and edit from there, rather than translating from scratch.

The Transformer and the Rise of Large Language Models

No discussion of modern NLP is complete without addressing large language models (LLMs). If you want to understand why ChatGPT, Gemini, and Claude feel qualitatively different from earlier AI assistants, the answer lies in the Transformer architecture and the training approach behind LLMs.

How a Transformer Processes Language

The core innovation of the Transformer is the self-attention mechanism. When processing the word “it” in a sentence, a self-attention layer can look at every other word in the context window and determine which ones are most relevant for resolving what “it” refers to. These comparisons are computed for all positions at once rather than one word at a time, as earlier recurrent networks did, which lets Transformers train far more efficiently on parallel hardware such as GPUs and makes it easier to connect words that are far apart.
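
The sketch below implements single-head scaled dot-product self-attention, softmax(Q K^T / sqrt(d_k)) V as defined in Attention Is All You Need, in plain Python. The input vectors and projection matrices are arbitrary toy values (identity matrices stand in for learned weights); real models use learned projections, many heads, and many stacked layers. Every position is scored against every other, which is also why the cost grows quadratically with sequence length.

```python
import math

def softmax(xs):
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def matmul(a, b):
    """Multiply matrix a (n x m) by matrix b (m x p), both given as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]

def self_attention(x, w_q, w_k, w_v):
    """Single-head scaled dot-product self-attention over the rows of x."""
    q, k, v = matmul(x, w_q), matmul(x, w_k), matmul(x, w_v)
    d_k = len(k[0])
    weights = []
    for q_i in q:
        # Score this position's query against every position's key.
        scores = [sum(a * b for a, b in zip(q_i, k_j)) / math.sqrt(d_k) for k_j in k]
        weights.append(softmax(scores))
    # Each output row is a relevance-weighted mix of all value vectors.
    return matmul(weights, v), weights

tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # three toy token vectors
identity = [[1.0, 0.0], [0.0, 1.0]]            # stand-in for learned projections
output, attention = self_attention(tokens, identity, identity, identity)
for row in attention:
    print([round(w, 2) for w in row])  # each row of weights sums to 1
```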

Stacking many layers of self-attention on top of each other, trained on enormous corpora of text, produces models that develop rich internal representations of language structure, factual knowledge, and reasoning patterns.

Pre-training and Fine-tuning

LLMs are typically trained in two broad stages. In pre-training, the model is trained on massive datasets (hundreds of billions to trillions of tokens of text from the internet, books, and other sources) on a simple self-supervised task: predict the next token. Despite the simplicity of the task, training at sufficient scale produces models with broad language understanding and a surprising range of reasoning capabilities.
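
The next-token objective can be illustrated with the simplest possible language model, a bigram model that counts which token follows which. This is a stand-in, not how LLMs work internally: they replace the count table with a Transformer conditioned on thousands of preceding tokens. The key point carries over, though: the training “labels” are simply the next tokens of the text itself, so no human annotation is needed.

```python
from collections import Counter, defaultdict

def train_bigram(tokens):
    """Count, for each token, which tokens follow it in the training text."""
    counts = defaultdict(Counter)
    for current, following in zip(tokens, tokens[1:]):
        counts[current][following] += 1  # the text supplies its own label
    return counts

def next_token_probs(counts, token):
    """Estimate P(next token | current token) from the counts."""
    followers = counts.get(token, Counter())
    total = sum(followers.values())
    return {t: c / total for t, c in followers.items()} if total else {}

corpus = "the cat sat on the mat . the cat ate the fish .".split()
model = train_bigram(corpus)
print(next_token_probs(model, "the"))  # {'cat': 0.5, 'mat': 0.25, 'fish': 0.25}
```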

In fine-tuning, the pre-trained model is further trained on curated datasets for specific tasks or behaviours — following instructions, being helpful and safe, answering questions in a particular style. This is how a raw language model becomes a useful assistant.

This paradigm — train once at massive scale, then fine-tune for specific applications — has dramatically lowered the cost of deploying NLP capabilities across a huge range of tasks. For a deeper look at how this fits into the broader AI landscape, see our guide to AI and machine learning.

Real-World Applications of NLP

NLP is not a theoretical curiosity. It is embedded in tools and systems used by billions of people every day. Here are the major application domains:

Search Engines

Modern search is fundamentally an NLP problem. Google’s BERT update (2019) was one of the most significant changes in search history precisely because it brought Transformer-based language understanding to query processing. Search engines now understand the intent behind a query — not just the keywords in it. A search for “do I need a visa to visit Japan from Australia” is understood as a specific practical question, not a bag of keywords.

Virtual Assistants and Chatbots

Siri, Alexa, Google Assistant, and enterprise chatbots all rely on NLP pipelines — specifically intent recognition (what does the user want?) and entity extraction (what are the key details?) — to convert spoken or typed language into structured commands that software can execute. LLM-powered assistants go further, handling open-ended conversation and complex reasoning.
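
A toy version of that pipeline: a keyword-based intent detector plus a regular-expression slot extractor that turns an utterance into a structured command. The intent names, keywords, and time pattern are all hypothetical; production assistants use trained classifiers and sequence taggers for these steps, and naive substring matching like this would, for example, also find “rain” inside “training”.

```python
import re

# Hypothetical intents and keywords, for illustration only.
INTENT_KEYWORDS = {
    "set_alarm": ["alarm", "wake me"],
    "get_weather": ["weather", "forecast", "rain"],
}
# Matches times such as "7 am" or "6:30 pm".
TIME_PATTERN = re.compile(r"\b(\d{1,2})(?::(\d{2}))?\s*(am|pm)\b", re.IGNORECASE)

def parse_command(utterance):
    """Map free text to a structured command: an intent plus extracted slots."""
    text = utterance.lower()
    intent = next((name for name, keys in INTENT_KEYWORDS.items()
                   if any(k in text for k in keys)), "unknown")
    slots = {}
    match = TIME_PATTERN.search(text)
    if match:
        hour = int(match.group(1)) % 12  # 12 am -> 0, 12 pm -> 12 after the next step
        if match.group(3) == "pm":
            hour += 12
        minute = int(match.group(2) or 0)
        slots["time"] = f"{hour:02d}:{minute:02d}"
    return {"intent": intent, "slots": slots}

print(parse_command("Wake me up at 6:30 am tomorrow"))
# {'intent': 'set_alarm', 'slots': {'time': '06:30'}}
```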

Machine Translation

Real-time translation of web pages, documents, conversations, and subtitles — now routine technology — is powered by neural MT systems. The quality gap between professional human translation and machine translation has narrowed significantly for major language pairs, though nuance and specialised domains remain areas where human expertise adds clear value.

Content Moderation

Platforms handling billions of posts per day rely on NLP to detect hate speech, misinformation, spam, and harmful content at scale. This is one of the harder NLP applications — it requires cultural context, understanding of current events, and sensitivity to language that evolves quickly.

Healthcare and Clinical NLP

Clinical notes, discharge summaries, medical literature, and patient records are overwhelmingly unstructured text. NLP systems that can extract diagnoses, medications, symptoms, and outcomes from clinical text are enabling new approaches to population health analysis, drug discovery, and clinical decision support. This is one of the highest-impact application areas for the technology.

Legal and Financial Document Analysis

Contract review, due diligence, regulatory filings, and earnings call analysis are all domains where NLP is reducing the time required for high-volume document processing. A first-pass review that might take a team of lawyers days can often be completed in minutes by a well-tuned NLP system, with human experts still checking what it flags.

Code Generation and Analysis

GitHub Copilot, Amazon CodeWhisperer, and similar tools apply LLM capabilities to programming languages. Because code has syntax and semantics like natural language (and because LLMs are trained on vast amounts of public code), these tools can autocomplete functions, explain code, find bugs, and translate between programming languages with genuine practical utility.

The Current Frontiers: What NLP Still Can’t Do Well

Honest coverage of NLP requires acknowledging its limitations — particularly given how capable modern systems appear on the surface.

Hallucination and Factual Reliability

LLMs generate text by predicting plausible continuations, not by retrieving verified facts. This means they can produce fluent, confident text that is factually wrong — a phenomenon called hallucination. For any application where factual accuracy is critical (medical advice, legal guidance, financial information), this is a significant limitation that retrieval-augmented approaches and improved training methods are actively trying to address.
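
A minimal sketch of the retrieval-augmented idea: find the stored passage most relevant to the question and place it in the prompt, with an instruction to answer only from that context. The document list, the word-overlap retriever, and the prompt wording are all hypothetical; real systems typically retrieve with embeddings from a vector index, and grounding of this kind reduces hallucination without eliminating it.

```python
import re

# Hypothetical document store; a real system would use a search or vector index.
DOCUMENTS = [
    "The Eiffel Tower is in Paris and was completed in 1889.",
    "Mount Everest is the highest mountain above sea level.",
    "The Great Wall of China stretches across northern China.",
]

def words(text):
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question, documents, k=1):
    """Rank documents by how many question words they share (a crude lexical retriever)."""
    q = words(question)
    return sorted(documents, key=lambda d: len(q & words(d)), reverse=True)[:k]

def build_prompt(question, documents):
    """Assemble a prompt that grounds the model's answer in retrieved text."""
    context = "\n".join(f"- {d}" for d in retrieve(question, documents))
    return (
        "Answer using only the context below. If the answer is not there, say you don't know.\n"
        f"Context:\n{context}\n"
        f"Question: {question}\nAnswer:"
    )

print(build_prompt("When was the Eiffel Tower completed?", DOCUMENTS))
```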

Reasoning and Multi-Step Logic

LLMs can appear to reason, but many researchers argue that their reasoning is better described as pattern matching at a high level of abstraction. They often struggle with novel logical puzzles, multi-step arithmetic, and tasks that require genuine causal understanding rather than statistical association. Techniques like chain-of-thought prompting, which asks the model to write out intermediate steps before giving an answer, improve performance significantly, but the gap between LLM “reasoning” and genuine logical deduction remains an active research question.

Long-Context Understanding

Despite context windows now extending to hundreds of thousands of tokens, several studies have found that model performance often degrades as inputs grow longer, particularly when the relevant information sits in the middle of the context rather than near its beginning or end. The ability to maintain coherent understanding and retrieval across very long documents is still being actively improved.

Low-Resource Languages

NLP performance is dramatically better for languages with large amounts of training data — English, Mandarin, Spanish — than for the thousands of languages with limited digital text corpora. The technology remains linguistically unequal in ways that have real implications for global access.

NLP and the Broader AI Landscape

NLP doesn’t exist in isolation. It increasingly intersects with other branches of AI in ways that are reshaping what’s possible:

- Multimodal AI — systems like GPT-4o combine language understanding with vision, allowing models to reason about images, charts, and video alongside text. The boundaries between NLP and computer vision are dissolving.

- AI Agents — LLMs are increasingly used as the reasoning engine in autonomous AI agents that can browse the web, write and execute code, and complete multi-step tasks. NLP is the interface through which these agents receive instructions and report results.

- Generative AI — the explosion of generative content tools, from AI writing assistants to synthetic media, is largely built on NLP foundations. Understanding NLP is essential context for understanding what generative AI is and how it works.

Key Takeaways

- Natural Language Processing is the branch of AI that enables machines to read, understand, and generate human language.

- Modern NLP is built on the Transformer architecture, which uses self-attention to process language with far greater contextual awareness than earlier approaches.

- Large language models are pre-trained on vast text corpora and fine-tuned for specific applications — a paradigm that has made high-quality NLP accessible across a huge range of use cases.

- Real-world NLP applications include search, translation, virtual assistants, healthcare, legal analysis, and code generation.

- Current limitations — hallucination, logical reasoning, low-resource language performance — are active research areas, not permanent ceilings.

- NLP is foundational to understanding the broader AI landscape, from generative AI to autonomous AI agents.